Attention (machine learning)

In artificial neural networks, attention is a technique that is meant to mimic cognitive attention. The effect enhances some parts of the input data while diminishing other parts — the motivation being that the network should devote more focus to the small, but important, parts of the data. Learning which part of the data is more important than another depends on the context, and this is trained by gradient descent.

| Part of a series on |

| Machine learning and data mining |

|---|

|

Attention-like mechanisms were introduced in the 1990s under names like multiplicative modules, sigma pi units, and hyper-networks.[1] Its flexibility comes from its role as "soft weights" that can change during runtime, in contrast to standard weights that must remain fixed at runtime. Uses of attention include memory in neural Turing machines, reasoning tasks in differentiable neural computers,[2] language processing in transformers, and LSTMs, and multi-sensory data processing (sound, images, video, and text) in perceivers. [3][4][5][6] Listed in the Variants section below are the many schemes to implement the soft-weight mechanisms.

General idea

Given a sequence of tokens labeled by the index , a neural network computes a soft weight for each with the property that is non-negative and . Each is assigned a value vector which is computed from the word embedding of the th token. The weighted average is the output of the attention mechanism.

The query-key mechanism computes the soft weights. From the word embedding of each token, it computes its corresponding query vector and key vector . The weights are obtained by taking the softmax function of the dot product where represents the current token and represents the token that's being attended to.

In some architectures, there are multiple "heads" of attention (termed 'multi-head attention'), each operating independently with their own queries, keys, and values.

A language translation example

To build a machine that translates English to French, one takes the basic Encoder-Decoder and grafts an attention unit to it (diagram below). In the simplest case, the attention unit consists of dot products of the recurrent encoder states and does not need training. In practice, the attention unit consists of 3 fully-connected neural network layers called query-key-value that need to be trained. See the Variants section below.

| Label | Description |

|---|---|

| 100 | Max. sentence length |

| 300 | Embedding size (word dimension) |

| 500 | Length of hidden vector |

| 9k, 10k | Dictionary size of input & output languages respectively. |

| x, Y | 9k and 10k 1-hot dictionary vectors. x → x implemented as a lookup table rather than vector multiplication. Y is the 1-hot maximizer of the linear Decoder layer D; that is, it takes the argmax of D's linear layer output. |

| x | 300-long word embedding vector. The vectors are usually pre-calculated from other projects such as GloVe or Word2Vec. |

| h | 500-long encoder hidden vector. At each point in time, this vector summarizes all the preceding words before it. The final h can be viewed as a "sentence" vector, or a thought vector as Hinton calls it. |

| s | 500-long decoder hidden state vector. |

| E | 500 neuron RNN encoder. 500 outputs. Input count is 800–300 from source embedding + 500 from recurrent connections. The encoder feeds directly into the decoder only to initialize it, but not thereafter; hence, that direct connection is shown very faintly. |

| D | 2-layer decoder. The recurrent layer has 500 neurons and the fully-connected linear layer has 10k neurons (the size of the target vocabulary).[7] The linear layer alone has 5 million (500 × 10k) weights -- ~10 times more weights than the recurrent layer. |

| score | 100-long alignment score |

| w | 100-long vector attention weight. These are "soft" weights which changes during the forward pass, in contrast to "hard" neuronal weights that change during the learning phase. |

| A | Attention module — this can be a dot product of recurrent states, or the query-key-value fully-connected layers. The output is a 100-long vector w. |

| H | 500×100. 100 hidden vectors h concatenated into a matrix |

| c | 500-long context vector = H * w. c is a linear combination of h vectors weighted by w. |

Viewed as a matrix, the attention weights show how the network adjusts its focus according to context.

| I | love | you | |

| je | 0.94 | 0.02 | 0.04 |

| t' | 0.11 | 0.01 | 0.88 |

| aime | 0.03 | 0.95 | 0.02 |

This view of the attention weights addresses the "explainability" problem that neural networks are criticized for. Networks that perform verbatim translation without regard to word order would have a diagonally dominant matrix if they were analyzable in these terms. The off-diagonal dominance shows that the attention mechanism is more nuanced. On the first pass through the decoder, 94% of the attention weight is on the first English word "I", so the network offers the word "je". On the second pass of the decoder, 88% of the attention weight is on the third English word "you", so it offers "t'". On the last pass, 95% of the attention weight is on the second English word "love", so it offers "aime".

Variants

There are many variants of attention that implements soft weights, including (a) Bahdanau Attention,[8] also referred to as additive attention, and (b) Luong Attention [9] which is known as multiplicative attention, built on top of additive attention, and (c) self-attention introduced in transformers. For convolutional neural networks, the attention mechanisms can also be distinguished by the dimension on which they operate, namely: spatial attention,[10] channel attention,[11] or combinations of both.[12][13]

These variants recombine the encoder-side inputs to redistribute those effects to each target output. Often, a correlation-style matrix of dot products provides the re-weighting coefficients (see legend).

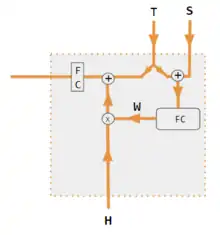

| 1. encoder-decoder dot product | 2. encoder-decoder QKV | 3. encoder-only dot product | 4. encoder-only QKV | 5. Pytorch tutorial |

|---|---|---|---|---|

Both encoder & decoder are needed to calculate attention.[9] |

Both encoder & decoder are needed to calculate attention.[14] |

Decoder is not used to calculate attention. With only 1 input into corr, W is an auto-correlation of dot products. wij = xi xj[15] |

Decoder is not used to calculate attention.[16] |

A fully-connected layer is used to calculate attention instead of dot product correlation.[17] |

| Label | Description |

|---|---|

| Variables X, H, S, T | Upper case variables represent the entire sentence, and not just the current word. For example, H is a matrix of the encoder hidden state—one word per column. |

| S, T | S, decoder hidden state; T, target word embedding. In the Pytorch Tutorial variant training phase, T alternates between 2 sources depending on the level of teacher forcing used. T could be the embedding of the network's output word; i.e. embedding(argmax(FC output)). Alternatively with teacher forcing, T could be the embedding of the known correct word which can occur with a constant forcing probability, say 1/2. |

| X, H | H, encoder hidden state; X, input word embeddings. |

| W | Attention coefficients |

| Qw, Kw, Vw, FC | Weight matrices for query, key, vector respectively. FC is a fully-connected weight matrix. |

| ⊕, ⊗ | ⊕, vector concatenation; ⊗, matrix multiplication. |

| corr | Column-wise softmax(matrix of all combinations of dot products). The dot products are xi* xj in variant #3, hi* sj in variant 1, and columni ( Kw* H )* column j ( Qw* S ) in variant 2, and column i (Kw* X)* column j (Qw* X) in variant 4. Variant 5 uses a fully-connected layer to determine the coefficients. If the variant is QKV, then the dot products are normalized by the sqrt(d) where d is the height of the QKV matrices. |

See also

- Transformer (machine learning model) § Scaled dot-product attention

- Perceiver § Components for query-key-value (QKV) attention

References

- Yann Lecun (2020). Deep Learning course at NYU, Spring 2020, video lecture Week 6. Event occurs at 53:00. Retrieved 2022-03-08.

- Graves, Alex; Wayne, Greg; Reynolds, Malcolm; Harley, Tim; Danihelka, Ivo; Grabska-Barwińska, Agnieszka; Colmenarejo, Sergio Gómez; Grefenstette, Edward; Ramalho, Tiago; Agapiou, John; Badia, Adrià Puigdomènech; Hermann, Karl Moritz; Zwols, Yori; Ostrovski, Georg; Cain, Adam; King, Helen; Summerfield, Christopher; Blunsom, Phil; Kavukcuoglu, Koray; Hassabis, Demis (2016-10-12). "Hybrid computing using a neural network with dynamic external memory". Nature. 538 (7626): 471–476. Bibcode:2016Natur.538..471G. doi:10.1038/nature20101. ISSN 1476-4687. PMID 27732574. S2CID 205251479.

- Vaswani, Ashish; Shazeer, Noam; Parmar, Niki; Uszkoreit, Jakob; Jones, Llion; Gomez, Aidan N.; Kaiser, Lukasz; Polosukhin, Illia (2017-12-05). "Attention Is All You Need". arXiv:1706.03762 [cs.CL].

- Ramachandran, Prajit; Parmar, Niki; Vaswani, Ashish; Bello, Irwan; Levskaya, Anselm; Shlens, Jonathon (2019-06-13). "Stand-Alone Self-Attention in Vision Models". arXiv:1906.05909 [cs.CV].

- Jaegle, Andrew; Gimeno, Felix; Brock, Andrew; Zisserman, Andrew; Vinyals, Oriol; Carreira, Joao (2021-06-22). "Perceiver: General Perception with Iterative Attention". arXiv:2103.03206 [cs.CV].

- Ray, Tiernan. "Google's Supermodel: DeepMind Perceiver is a step on the road to an AI machine that could process anything and everything". ZDNet. Retrieved 2021-08-19.

- "Pytorch.org seq2seq tutorial". Retrieved December 2, 2021.

- Bahdanau, Dzmitry (2016-05-19). "Neural Machine Translation by Jointly Learning to Align and Translate". arXiv:1409.0473 [cs.CL].

- Luong, Minh-Thang (2015-09-20). "Effective Approaches to Attention-based Neural Machine Translation". arXiv:1508.04025v5 [cs.CL].

- Zhu, Xizhou; Cheng, Dazhi; Zhang, Zheng; Lin, Stephen; Dai, Jifeng (2019). "An Empirical Study of Spatial Attention Mechanisms in Deep Networks". 2019 IEEE/CVF International Conference on Computer Vision (ICCV): 6687–6696. arXiv:1904.05873. doi:10.1109/ICCV.2019.00679. ISBN 978-1-7281-4803-8. S2CID 118673006.

- Hu, Jie; Shen, Li; Sun, Gang (2018). "Squeeze-and-Excitation Networks". IEEE/CVF Conference on Computer Vision and Pattern Recognition: 7132–7141. arXiv:1709.01507. doi:10.1109/CVPR.2018.00745. ISBN 978-1-5386-6420-9. S2CID 206597034.

- Woo, Sanghyun; Park, Jongchan; Lee, Joon-Young; Kweon, In So (2018-07-18). "CBAM: Convolutional Block Attention Module". arXiv:1807.06521 [cs.CV].

- Georgescu, Mariana-Iuliana; Ionescu, Radu Tudor; Miron, Andreea-Iuliana; Savencu, Olivian; Ristea, Nicolae-Catalin; Verga, Nicolae; Khan, Fahad Shahbaz (2022-10-12). "Multimodal Multi-Head Convolutional Attention with Various Kernel Sizes for Medical Image Super-Resolution". arXiv:2204.04218 [eess.IV].

- Neil Rhodes (2021). CS 152 NN—27: Attention: Keys, Queries, & Values. Event occurs at 06:30. Retrieved 2021-12-22.

- Alfredo Canziani & Yann Lecun (2021). NYU Deep Learning course, Spring 2020. Event occurs at 05:30. Retrieved 2021-12-22.

- Alfredo Canziani & Yann Lecun (2021). NYU Deep Learning course, Spring 2020. Event occurs at 20:15. Retrieved 2021-12-22.

- Robertson, Sean. "NLP From Scratch: Translation With a Sequence To Sequence Network and Attention". pytorch.org. Retrieved 2021-12-22.

External links

- Dan Jurafsky and James H. Martin (2022) Speech and Language Processing (3rd ed. draft, January 2022), ch. 10.4 Attention and ch. 9.7 Self-Attention Networks: Transformers

- Alex Graves (4 May 2020), Attention and Memory in Deep Learning (video lecture), DeepMind / UCL, via YouTube

- Rasa Algorithm Whiteboard - Attention via YouTube